Speech-to-Text: Artificial Intelligence for Genuine VoC

Siri

Chances are, you have already heard about Apple’s famous iPhone personality. Through extensive marketing campaigns, Apple unveiled Siri, a feature that allows users to interact with their phone by speaking to it. Yes, that’s right, by speaking to your phone.

Say you want to know what the weather is going to look like. All you have to do is say to your phone, “Weather,” and it replies with the forecast for your area. Want to check how busy your day is? Just ask, “What’s my day look like?” and your phone reports your schedule. Is Siri the beginning of pocket artificial intelligence? It (she?) very well may be.

Why am I talking about Siri? Because Siri demonstrates a real-life application of some very cool speech-to-text technology. Speech-to-text is the ability of a computer to transcribe spoken language into usable text. The technology is still new, but it is maturing rapidly.

Watson

Yet another famous piece of artificial intelligence that you’ve likely heard of, Watson is an IBM supercomputer that gained wide acclaim by appearing on Jeopardy! and defeating two of the game show’s top champions, Ken Jennings and Brad Rutter.

Over three days of trivia, Watson racked up $77,147, whereas Ken and Brad took in $24,000 and $21,600 respectively. As the Jeopardy! clues were being displayed visually to the other two contestants, Watson was receiving them through a direct text feed. Once the text was input, Watson didn’t just recognize the words, he (it?) understood how they related to each other. With that understanding, he analyzed 200 million pages of information to find the correct answer—all in the time it takes to sneeze.

Is Watson the first step toward machines taking over the world? Quite the opposite, actually. The IBM technology that fuels Watson has the potential to be one of this generation’s greatest allies. It’s already being used to improve the medical diagnosis process by combining symptoms, family history, current medications, doctor’s notes, and other information to suggest a diagnosis.

Speech-to-Text

If you want the analytical powers of a supercomputer like Watson (and, trust me, you do), you need text. In a world that still speaks first and writes second, we need more than a computer with Watson’s brain; we need one with Siri’s ears.

That’s the role of speech-to-text. Combine an advanced speech-to-text engine with an analytical supercomputer and you have the key to immense possibilities. In a Fast Company article, Jon Gertner builds on the words of IBM’s chief of research, John Kelly, to make this very point:

“IBM executives have come to believe that Watson represents the first machine of the third computer age, a category now referred to within the company as cognitive computing. As Kelly describes it, the first generation of computers were tabulating machines that added up figures. ‘The second generation,’ he says, ‘were the programmable systems—the mainframe, the first IBM 360, PCs, all the computers we have today.’ Now, Kelly believes, we’ve arrived at the cognitive moment—a moment of true artificial intelligence. These computers, such as Watson, can recognize important content within language, both written and spoken. They do not ask us to communicate with them in their coded language; they speak ours.”

InMoment

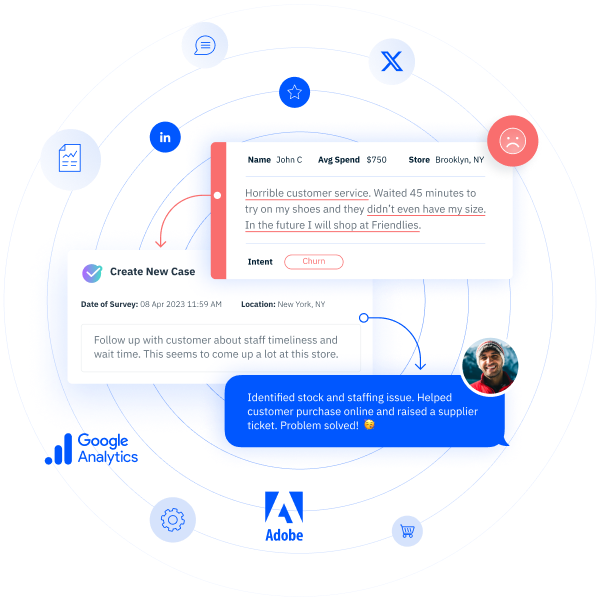

We live and breathe the voice of the customer (VoC) trade every day. We collect millions of surveys every month from the individual customers of our clients. We have held the benefits of speech-to-text and IBM Watson technology in our hands. And they are stunning.

Using these universal-grade systems, our ability to analyze customer surveys and reviews for real-time, actionable insights has leapt forward to include all unstructured, unsolicited feedback across multiple written and spoken world languages.

Let me walk you through just one scenario: a phone survey. Last year, we collected 113,000 phone surveys for one of our clients. To put that in perspective, it would take that client 1,000 hours a year to listen to every survey—that’s one full-time employee listening to surveys non-stop for six months, and that doesn’t even take into account the time involved with organizing, analyzing, and reporting the results!
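The arithmetic behind that comparison can be sketched in a few lines of Python. This is only a rough check of the figures quoted above: the 40-hour work week is an assumption (the article does not state one), and the function name `listening_workload` is made up for illustration.

```python
# Figures quoted in the article.
SURVEYS_PER_YEAR = 113_000
LISTENING_HOURS_PER_YEAR = 1_000

# Assumption (not stated in the article): a 40-hour work week.
HOURS_PER_WORK_WEEK = 40
WEEKS_PER_MONTH = 52 / 12


def listening_workload(surveys, hours, hours_per_week=HOURS_PER_WORK_WEEK):
    """Return (average seconds per survey, work weeks, work months)."""
    seconds_per_survey = hours * 3600 / surveys
    work_weeks = hours / hours_per_week
    work_months = work_weeks / WEEKS_PER_MONTH
    return seconds_per_survey, work_weeks, work_months


seconds, weeks, months = listening_workload(SURVEYS_PER_YEAR, LISTENING_HOURS_PER_YEAR)
print(f"Implied average survey length: {seconds:.0f} seconds")
print(f"Listening time: {weeks:.0f} work weeks, about {months:.1f} months")
```

Under that assumption, the quoted numbers imply an average survey of roughly 32 seconds and 25 work weeks of pure listening, which is a little under six months.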

Is this how you plan on hearing your customers? Is it even worth it to listen to your customers anyway?

Yes. It is. The reality is, you can’t afford not to. When business analysts tell you to care about every single one of your customers, they are not just speaking ethically; they are speaking financially. The quality and quantity of research on the matter have made it undeniable.

Take Forrester’s customer experience index (CXi), for instance. The data show that U.S. businesses with above-average CXi scores make millions, if not billions, more each year than businesses with below-average scores. The key to a high CXi? Listening and responding to customer feedback (Forrester, “The Business Impact Of Customer Experience, 2012”). Recent studies have also shown that efforts to retain customers are more profitable than efforts to acquire new ones (Bain & Company, “The Economics of Loyalty”).

At InMoment, we provide the tools to capture and use the voice of the customer in real time. This includes both speech-to-text and IBM analytics, two of the most powerful technologies the business world has ever seen.

Your customers expect to be heard individually and addressed personally. The speech-to-text and IBM content analytics we use at InMoment make that possible.