Survey Response Incentives: What to Know About Improving Customer Engagement

Do survey incentives lead to higher response rates or biased results? Get insights into when and how to use incentives effectively.

“Is our response rate too low?”

“What can we do to improve it?”

“Should we provide an incentive for people to respond?”

If you’re a customer experience (CX) leader, you’ve likely faced all of these questions many times before. However, these relatively simple questions have somewhat complex answers.

Survey incentives do encourage some people to offer feedback, which could mean more responses and diverse insights for your brand. However, they might not provide the answers CX teams need to improve customer experiences.

They may attract the wrong respondents, skew feedback, or produce superficial responses. Planning, budgeting for, and implementing incentives can also be a challenge.

Here, we’ll look at the value of survey incentives to help you decide if they’re what your CX team needs. We’ll also explore ways to boost your survey response rates without incentives, plus considerations to make before rolling out an incentives program.

How Much Do Incentives Actually Increase Survey Response Rates?

Survey incentives are rewards or forms of recognition that can encourage your target audiences to participate in and complete surveys. And there’s no denying that they can boost response rates—in fact, studies show that just a small monetary incentive can increase survey participation by 25%.

However, incentives alone won’t reverse the trend toward declining survey response rates. Additionally, getting a lot of responses doesn’t always translate to valuable insights for CX teams, especially when incentives are involved. As mentioned, incentives can attract people who are only interested in the rewards, which hurts survey data quality.

Incentives could also influence how participants answer questions. Some may be overly complimentary, believing that a positive response is what earns the reward. Others may rush through your survey just to get the reward and not provide much meaningful feedback.

Increasing the Number of Responses vs. Improving Representativeness

Before you toss your current strategy out the window, ask yourself whether your goal is simply to get more responses or if you’re really looking for a wider range of responses. There’s a big difference between response rates and overall representativeness, so you’ll need to figure out which one you’re aiming for before you start making changes.

For example, if you’re getting plenty of responses about your SaaS platform from power users, but you’re only hearing crickets from the more casual users, that’s a representativeness issue. And if you tweak your approach to increase response rates, you might just end up with even more responses from power users.

Ultimately, the goal is to make sure your respondents actually reflect the broader customer base you’re trying to understand. More responses are nice, but meaningful responses—the kind that mirror your real audience—are what move the needle for your CX strategy.
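To see which problem you actually have, you can compare each customer segment’s share of responses with its share of the people you invited. The Python sketch below is one minimal way to do that. It assumes invitations went to every customer (or to a random sample), so a segment’s share of invitations stands in for its share of the customer base. The `Segment` class, the `representativeness_report` function, the 0.5 tolerance, and the counts are all hypothetical, chosen to mirror the power-user example above.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    invited: int    # customers in this segment who received the survey
    responded: int  # customers in this segment who completed it


def representativeness_report(segments, tolerance=0.5):
    """Compare each segment's share of responses with its share of invitations.

    A segment is flagged as under-represented when its share of responses is
    less than `tolerance` times its share of invitations. The default of 0.5
    is a hypothetical threshold; pick one that fits your program.
    """
    total_invited = sum(s.invited for s in segments)
    total_responded = sum(s.responded for s in segments)
    report = {}
    for s in segments:
        invited_share = s.invited / total_invited
        response_share = s.responded / total_responded if total_responded else 0.0
        report[s.name] = {
            "response_rate": s.responded / s.invited if s.invited else 0.0,
            "invited_share": invited_share,
            "response_share": response_share,
            "under_represented": response_share < tolerance * invited_share,
        }
    return report


# Hypothetical SaaS example: plenty of power-user responses, few casual ones.
segments = [
    Segment("power users", invited=2000, responded=400),
    Segment("casual users", invited=8000, responded=200),
]
for name, row in representativeness_report(segments).items():
    print(f"{name}: response rate {row['response_rate']:.1%}, "
          f"under-represented: {row['under_represented']}")
```

In this made-up data, casual users make up 80% of the invited base but only a third of the responses, so they are flagged. Persuading even more power users to respond would raise the overall response rate while widening that gap.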

How To Boost Survey Responses Without Incentives

The choice of whether to respond to a survey invitation is a cost-benefit decision for the customer. How much will completing the survey cost the customer, and will it outweigh the benefits they’ll receive?

At first glance, you might think there’s no cost to the customer to respond. But in reality, there are many costs, and they’ve been increasing over the past few decades. Potential costs might include:

- Time and Effort: Not only are people’s schedules busier nowadays, but they’re also getting more survey requests from a wide range of businesses. And many of those surveys demand a lot of thought and effort to complete.

- Hassle/Boredom: Some customers feel “duped” by agreeing to take what they think is a short survey—only to find that it’s long and tedious.

- Potential for Loss of Privacy: With data breaches constantly making the news, many customers worry their information will not stay confidential.

- Potential for Being Put on Numerous Mail/Email/Phone Lists: Have you ever filled out a form online and been immediately overwhelmed by spam calls, texts, and emails? So have your customers, and they’re not interested in repeating that experience.

Given these costs, your focus shouldn’t always be on offering incentives—they may not be enough to offset the costs and encourage participation. Instead, your team needs to ask, “How can we improve the benefit-to-cost ratio for customers?” The answer? Lower their costs and increase their benefits.

Reducing the Customer’s Costs

To determine how to minimize customers’ costs, put yourself in their shoes. What would make participating in and completing surveys less demanding for you? Once you find your answers, adjust your survey to encourage completion.

Here are some excellent starting points:

- Coordinate customer touchpoints. Many companies inadvertently over-survey their customers because different departments or divisions conduct independent research programs.

- Make the task as easy as possible. Use multiple-choice, Likert scale, and yes-or-no questions, rather than open-ended free text ones, so customers can respond with a few clicks.

- Reduce your survey length, but be careful not to make it too short. Sometimes, customers can interpret a very short survey as the company not being interested in their opinions and just “going through the motions” of gathering customer feedback. Using a tool like Active Listening in your open-ended survey questions can help prompt better answers with fewer questions.

- Be specific about your data collection policies. Make it clear how you will and will not use survey information and the steps you take to keep customer data safe.

- Avoid “nice to know” questions. Businesses often take a “might as well” approach and tack on relatively unimportant questions. But including unnecessary questions just lengthens your surveys and can hurt completion rates.

- Avoid sensitive questions like income and sexual orientation unless they’re necessary and applicable to your offerings. If you have to ask them, explain to the customer why and what you plan to do with the information.

- Set clear fatigue rules, ensuring you space out surveys for individual customers to prevent disengagement or frustration.

- Switch out the long annual customer surveys for microsurveys. Your customers might spend the same total amount of time responding, but breaking it up into spaced-out segments feels less overwhelming and demanding.
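Coordinating touchpoints and setting fatigue rules work best together: every team checks the same contact log before sending an invitation. The Python sketch below is a minimal, hypothetical version of that check. The 90-day minimum gap, the cap of four invitations per rolling year, the `can_survey` function, and the in-memory `contact_log` dictionary are placeholder assumptions, not recommended values or any particular platform’s API.

```python
from datetime import date, timedelta

# Hypothetical fatigue rules; tune these to your own program.
MIN_GAP = timedelta(days=90)  # minimum spacing between invitations
MAX_PER_YEAR = 4              # cap on invitations in any rolling 365 days


def can_survey(customer_id, today, contact_log):
    """Return True if inviting `customer_id` today respects the fatigue rules.

    `contact_log` maps customer IDs to the dates they were previously invited,
    pooled across every team that surveys customers.
    """
    # Ignore any invitations dated after `today`.
    past = [d for d in contact_log.get(customer_id, []) if d <= today]
    if not past:
        return True  # never surveyed before
    if today - max(past) < MIN_GAP:
        return False  # last invitation was too recent
    last_year = [d for d in past if today - d < timedelta(days=365)]
    return len(last_year) < MAX_PER_YEAR


# Example: a support-team survey went out on January 10.
log = {"C-001": [date(2024, 1, 10)]}
print(can_survey("C-001", date(2024, 2, 1), log))   # only 22 days later: blocked
print(can_survey("C-001", date(2024, 4, 15), log))  # 96 days later: allowed
```

In practice, the contact log would likely live in a shared system rather than in memory, so that a support survey and a marketing survey both count toward the same customer’s limit.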

These strategies reduce the need for incentives in feedback collection, which could mean more reliable insights and lower survey costs for your business.

Increasing the Customer’s Benefits

Emphasizing the survey’s value to the customer can also encourage responses, even without incentives. It doesn’t have to be a grand gesture; simple acknowledgments like these can go a long way toward making customers feel that responding is worth their time.

- Send customers “thank you” messages for participating in your surveys.

- Explain how their responses will directly lead to product or service improvements.

- Offer the option for a personalized follow-up to learn more about their unique experiences.

- Consider allowing survey takers to see other customers’ feedback. People are social beings and often want to know if their experiences are typical or atypical.

What To Consider Before Using Survey Incentives

As mentioned earlier, if you do offer incentives, you risk getting low-quality responses from participants who are just in it for the reward. So it’s better to start with the non-monetary methods listed above.

That said, if you try out the non-monetary strategies but see no improvement in your response rates, you could offer incentives to give customers a little nudge. But you need to be careful not to impact survey data quality—otherwise, you might end up on the wrong side of the FTC’s new rules regarding review ethics.

Some best practices to keep in mind when using incentives include:

Keep Incentives Small and Simple

The phrase “the bigger, the better” doesn’t apply to survey incentives. Giving out too-large incentives can lead to unreliable survey data by attracting people who just want the reward or making customers feel like they have to give positive feedback to “earn” it.

Large incentives could also bias your sample by encouraging lower-income individuals to respond at greater rates than higher-income individuals. This ultimately results in unreliable information, which is worse than no information, as it could lead you to invest in the wrong areas.

Instead of going big, offer small rewards that feel like a genuine thank-you rather than a bribe. For example, you could offer $5 instead of promising a $50 cash incentive.

Ensure Incentive Value Is the Same for Everyone

Your incentive should be of equal value to everyone, regardless of their experience or relationship with your business. If you decide to send $1 with your mail survey as an incentive, make sure every customer receives the same amount. In other words, don’t offer high-value gifts to loyal customers and low-value ones to new customers.

Unequal rewards can introduce bias and reduce trust, not only affecting the reliability of responses but also impacting customers’ relationships with your brand.

Choose Incentives That Work for Everyone

Incentives like discount coupons, vouchers, and gift cards have two major problems. First, they are more valuable to people who intend to return in the future than to those who are unlikely to return, which can bias your survey results.

Second, some survey takers may see them as “just another marketing ploy.” After all, they’re tied to the promise of returning to your business. For reliable results, look at your target population and offer incentives that appeal to every potential participant.

For example, if you’re a B2C brand, you might offer $5 in cash. But if you’re a B2B brand, you’ll need to get a little more creative—some respondents, like procurement teams, may be unable to accept direct incentives. Instead, you could offer to donate to a charity or local cause that resonates with everyone in your target group once you achieve your target survey completion rate.

When Is It Appropriate To Use Survey Incentives?

Survey incentives aren’t necessary in all scenarios. For example, you may not need them if you have highly engaged audiences or brand-loyal respondents. They may also not be necessary if non-monetary incentives, like appreciation messages, work well with your target audience.

However, there are some instances when using incentives may be appropriate, such as:

- Your surveys are part of a broader strategy to boost customer loyalty or encourage more retail purchases.

- Your focus is solely on increasing response volumes.

- You’re issuing transactional surveys—surveys tied to a specific event, like completing an appliance purchase—and want to encourage immediate feedback.

- You’re seeking feedback from customers with a shared cause. In this case, the promise of a donation can encourage more feedback, and higher-quality feedback.

Common Incentives To Offer for Survey Responses

Ideally, you should go for non-monetary rewards whenever possible. However, if you determine that incentives are necessary for your business, here are some great options that could work.

Lottery Entry To Win a Relevant Prize Upon Return of the Survey

This encourages not only participation but also completion. Letting customers know that completing the survey will serve as a lottery entry for a high-value prize adds an air of excitement, which could help you achieve higher completion rates.

This incentive is, however, only effective for certain types of surveys, such as telephone and online surveys, where customers can quickly provide their details to throw their hats in the ring.

It’s also worth noting that some jurisdictions have laws and regulations concerning the use of lotteries as incentives. To ensure compliance, hire a professional promotions management company to offer guidance and help manage the lottery.

Discount Coupons

Discount coupons can encourage participation while also driving future customer engagement and purchases, making them a great option.

However, as mentioned before, discounts may be more valuable to loyal customers and come off as marketing ploys to others. So they may not be the best option for all audiences. Offer them only if you plan to engage long-term customers.

Contributing to a Charity in the Customer’s Name

Donating to a charitable cause on behalf of survey respondents can increase participation among socially conscious individuals and B2B respondents who can’t accept “gifts” from brands.

If you choose this incentive, offer several distinct charities to donate to. This way, customers can choose the specific causes they want to support.

Elevate Your Customer Survey Efforts With InMoment

Survey incentives can motivate some customers to offer feedback, but they can also affect the quality of that feedback, especially if they attract reward-driven respondents. So, before going the incentive route, consider non-monetary ways of improving participation rates or representativeness.

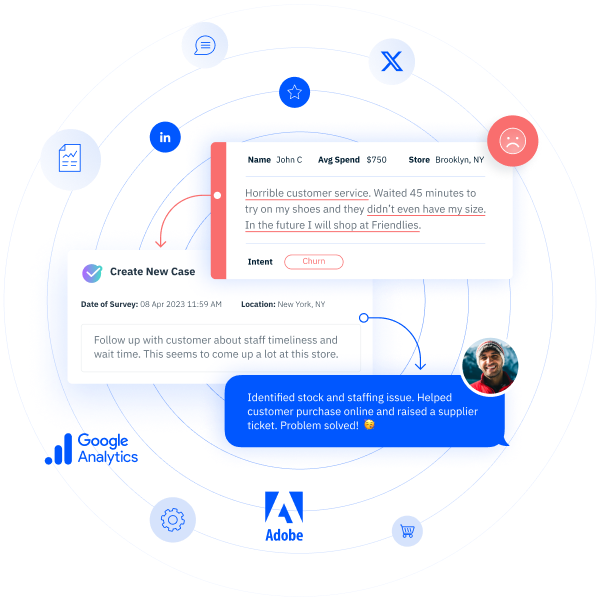

InMoment can support your feedback collection efforts with pre-built ADA-compliant survey templates. That means no more worries about whether your surveys are too short, too long, too complicated, or too vague. You’ll get the insights you need and the response rates you want.

Concerned about representativeness? InMoment can also trigger survey invitations from existing customer relationship management (CRM) systems, minimizing the risk of biased samples.

Schedule a free demo today and see how InMoment’s CX platform can take your survey response rates and quality to the next level!